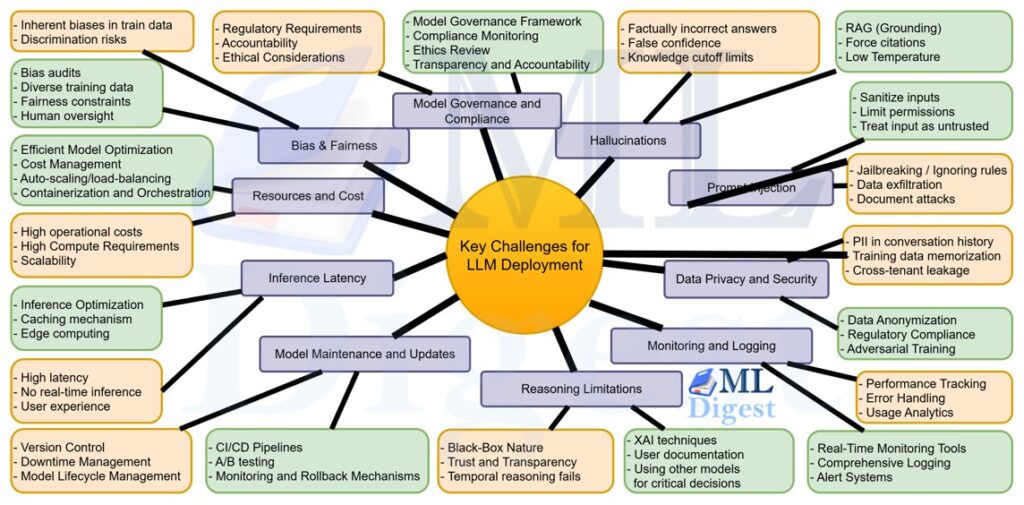

Moving large language models (LLMs) from development to production introduces a range of challenges that organizations must address to ensure successful and sustainable deployment. Below are the primary challenges and potential mitigation strategies for each.

1. Computational Resources and Cost

Challenges

- High Compute Requirements: LLMs, especially state-of-the-art models like GPT-4, require significant computational power for both training and inference. This necessitates powerful hardware, including GPUs or TPUs, which can be expensive.

- Scalability: As user demand grows, scaling the infrastructure to handle increased loads without compromising performance can be challenging.

- Operational Costs: Continuous operation, including energy consumption and maintenance of hardware, can lead to substantial ongoing expenses.

Mitigation Strategies

- Efficient Model Optimization: Utilize techniques such as model pruning, quantization, and knowledge distillation to reduce model size and computational demands.

- Cloud Services: Leverage cloud-based solutions that offer scalable resources, allowing for elastic scaling based on demand.

- Cost Management: Implement cost-monitoring tools and choose cost-effective instances or spot pricing options where appropriate.
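
As a rough illustration of the quantization idea listed above, the sketch below maps floating-point weights to 8-bit integers using a single scale factor. It is a toy, pure-Python version under simplifying assumptions (symmetric, per-tensor scaling). The names `quantize_int8` and `dequantize` are hypothetical, and a production system would normally use the quantization tooling of its inference framework instead.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Fall back to a scale of 1.0 when every weight is zero to avoid dividing by zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored weight differs from the original by at most about half a
# quantization step (scale / 2).
```

Each weight now needs one byte instead of four (for 32-bit floats), at the cost of a small rounding error per weight. Whether that error is acceptable depends on the model and task, so accuracy should be re-evaluated after quantizing.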

2. Latency and Real-Time Performance

Challenges

- Response Time: LLMs can exhibit high latency, making real-time applications (e.g., chatbots, interactive systems) sluggish.

- User Experience: Delays in responses can negatively impact user satisfaction and engagement.

Mitigation Strategies

- Inference Optimization: Use optimized libraries and frameworks (e.g., TensorRT, ONNX Runtime) to accelerate model inference.

- Caching Mechanisms: Implement caching for frequently requested responses or computations to reduce redundant processing.

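- Edge Computing: Deploy models closer to the end user on edge devices to minimize latency. Small language models (SLMs) are the more practical fit here, since edge hardware rarely has the memory or compute for a full-size LLM.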
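
The caching strategy above can be sketched as a small in-memory cache keyed on a normalized prompt. This is a minimal sketch, not a definitive design: `ResponseCache`, `cached_generate`, and the five-minute default expiry are hypothetical choices, and `generate` stands in for whatever function actually calls the model.

```python
import hashlib
import time
from typing import Callable, Dict, Optional, Tuple


class ResponseCache:
    """In-memory response cache with a time-to-live (TTL) per entry."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> Optional[str]:
        key = self._key(prompt)
        entry = self._store.get(key)
        if entry is None:
            return None
        stored_at, response = entry
        if self._clock() - stored_at > self._ttl:
            del self._store[key]  # entry is stale; drop it
            return None
        return response

    def put(self, prompt: str, response: str) -> None:
        self._store[self._key(prompt)] = (self._clock(), response)


def cached_generate(prompt: str, cache: ResponseCache,
                    generate: Callable[[str], str]) -> str:
    """Return a cached response when available; otherwise call the model and cache it."""
    hit = cache.get(prompt)
    if hit is not None:
        return hit
    response = generate(prompt)
    cache.put(prompt, response)
    return response
```

Exact-match caching like this only helps when the same prompts recur and when a repeated answer is acceptable. It is not suitable for prompts containing user-specific data unless the cache key also includes the user or session.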

3. Scalability

Challenges

- Handling Load Spikes: Sudden increases in user requests can overwhelm the system, leading to crashes or degraded performance.

- Distributed Deployment: Managing and synchronizing models across multiple servers or data centers adds complexity.

Mitigation Strategies

- Auto-Scaling: Implement auto-scaling policies that dynamically adjust resources based on traffic patterns.

- Load Balancing: Use load balancers to distribute incoming requests evenly across servers, preventing bottlenecks.

- Containerization and Orchestration: Utilize containers (e.g., Docker) and orchestration tools (e.g., Kubernetes) to manage scalable deployments efficiently.
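
To make the auto-scaling idea concrete, the sketch below computes a target replica count in proportion to how far an observed metric is from its target. It follows the same proportional rule that the Kubernetes Horizontal Pod Autoscaler documents, but the function name `desired_replicas`, the metric, and the limits are illustrative assumptions rather than a real configuration.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale the replica count in proportion to the metric's distance from its target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a traffic spike cannot scale past the budgeted maximum,
    # and a quiet period cannot scale below the availability minimum.
    return max(min_replicas, min(max_replicas, raw))


# Example: 4 replicas averaging 90 requests/s each against a target of 60.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```

For LLM serving, the chosen metric matters: requests per second, queue depth, or GPU utilization can each give different scaling behavior, and GPU-backed replicas often take minutes to start, so the minimum replica count usually needs headroom.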

4. Model Maintenance and Updates

Challenges

- Continuous Improvement: Regularly updating models to incorporate new data, fix issues, or improve performance requires ongoing effort.

- Version Control: Managing different versions of models and ensuring consistency across deployments can be complex.

- Downtime Management: Deploying updates without causing service interruptions necessitates careful planning.

Mitigation Strategies

- CI/CD Pipelines for ML: Establish continuous integration and continuous deployment pipelines tailored for machine learning to streamline updates.

- A/B Testing: Implement A/B testing to safely roll out updates and measure their impact before full deployment.

- Monitoring and Rollback Mechanisms: Ensure robust monitoring to detect issues early and maintain the ability to quickly revert to previous stable versions if necessary.
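
One way to combine A/B testing with a rollback check is sketched below: users are assigned deterministically to the stable or candidate model by hashing their ID, and a simple rule flags the candidate for rollback when its error rate is clearly worse. The names `assign_variant` and `should_rollback`, and the tolerance value, are hypothetical. A real rollout would also apply a statistical test rather than compare raw rates.

```python
import hashlib


def assign_variant(user_id: str, candidate_percent: int) -> str:
    """Deterministically route a user to 'candidate' or 'stable'.

    Hashing the user ID keeps each user on the same variant across requests.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "candidate" if bucket < candidate_percent else "stable"


def should_rollback(candidate_error_rate: float, stable_error_rate: float,
                    tolerance: float = 0.02) -> bool:
    """Flag a rollback when the candidate is worse than stable by more than `tolerance`."""
    return candidate_error_rate - stable_error_rate > tolerance
```

Starting with a small `candidate_percent` (for example 5) and raising it as the metrics hold limits the number of users exposed to a bad update.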

5. Data Privacy and Security

Challenges

- Sensitive Information Handling: LLMs may process and inadvertently expose sensitive user data.

- Compliance: Adhering to data protection regulations (e.g., GDPR, CCPA) is mandatory and can be complex.

- Vulnerability to Attacks: Systems may be susceptible to adversarial attacks, data poisoning, or malicious inputs aimed at exploiting the model.

Mitigation Strategies

- Data Anonymization: Ensure that training and input data are anonymized to protect user privacy.

- Secure Infrastructure: Implement robust security measures, including encryption, access controls, and regular security audits.

- Regulatory Compliance: Stay informed about relevant regulations and incorporate compliance into all stages of deployment.

- Adversarial Training: Train models to be resilient against known types of attacks and continuously evaluate their security posture.
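
As a small illustration of the anonymization step above, the sketch below masks email addresses and phone-like numbers before text is logged or sent to a model. The patterns and the `redact` function are simplified assumptions: they will miss many kinds of personal data (names, addresses, IDs) and are not a substitute for a dedicated PII-detection tool.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


print(redact("Contact jane.doe@example.com or +1 (555) 123-4567."))
# Contact [EMAIL] or [PHONE].
```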

6. Bias and Fairness

Challenges

- Inherent Biases: LLMs trained on large, diverse datasets may inherit and amplify societal biases present in the data.

- Discrimination Risks: Biased outputs can lead to unfair treatment of certain user groups, causing ethical and reputational issues.

Mitigation Strategies

- Bias Audits: Regularly audit models for biases by testing them against diverse and representative datasets.

- Diverse Training Data: Curate balanced and inclusive training datasets to minimize the introduction of biases.

- Fairness Constraints: Incorporate fairness constraints and objective functions during training to promote equitable outcomes.

- Human Oversight: Implement review processes where human evaluators assess and correct biased outputs as needed.
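
A bias audit needs at least one measurable quantity. The sketch below computes one common choice, the gap in positive-outcome rates between groups (often called the demographic parity difference). The function names and the sample records are hypothetical, and a single metric like this captures only one notion of fairness.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def positive_rate_by_group(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of positive outcomes per group, given (group, outcome) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records: Iterable[Tuple[str, bool]]) -> float:
    """Largest difference in positive rate between any two groups (0.0 means parity)."""
    rates = positive_rate_by_group(list(records))
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: (group label, whether the model output was favorable).
sample = [("a", True), ("a", True), ("a", False), ("a", False),
          ("b", True), ("b", False), ("b", False), ("b", False)]
print(demographic_parity_gap(sample))  # 0.25
```

A gap above an agreed threshold would then trigger the human review described above, rather than an automatic verdict.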

7. Model Interpretability and Explainability

Challenges

- Black-Box Nature: LLMs often operate as black boxes, making it difficult to understand how they arrive at specific outputs.

- Trust and Transparency: Lack of transparency can hinder user trust and limit the model’s adoption in sensitive applications (e.g., healthcare, finance).

Mitigation Strategies

- Explainable AI (XAI) Techniques: Use methods like SHAP values, LIME, or attention visualization to provide insights into model decision-making processes.

- User Documentation: Offer clear documentation on model capabilities, limitations, and areas where interpretability tools are applied.

- Simplified Models for Critical Decisions: In high-stakes scenarios, consider using more interpretable models alongside LLMs to provide complementary explanations.

8. Ethical Considerations

Challenges

- Content Moderation: Ensuring that LLMs do not generate harmful, inappropriate, or misleading content.

- Responsible Usage: Preventing misuse of models for malicious purposes such as generating disinformation, phishing, or other harmful activities.

Mitigation Strategies

- Content Filtering: Implement robust filtering systems to detect and block inappropriate or harmful content generated by the model.

- Usage Policies: Define and enforce clear usage policies that prohibit malicious or unethical applications of the model.

- User Education: Educate users about responsible usage practices and the potential risks associated with LLM outputs.

- Ethical Guidelines Compliance: Adhere to established ethical guidelines and frameworks for AI deployment.

9. Integration with Existing Systems

Challenges

- Compatibility: Ensuring that LLMs integrate seamlessly with existing software, databases, and workflows.

- APIs and Interfaces: Developing robust APIs that facilitate smooth communication between the model and other system components.

- Data Pipeline Management: Managing data flow between different stages of processing, from input ingestion to output delivery.

Mitigation Strategies

- Modular Architecture: Design systems with modular components that can be independently developed and integrated.

- Standardized APIs: Utilize standardized API protocols (e.g., REST, gRPC) to facilitate interoperability.

- Comprehensive Testing: Conduct thorough integration testing to identify and resolve compatibility issues before full deployment.

10. Monitoring and Logging

Challenges

- Performance Tracking: Continuously monitoring model performance to detect degradation, anomalies, or unexpected behaviors.

- Error Handling: Identifying and addressing errors or failures in the model’s outputs promptly.

- Usage Analytics: Understanding how users interact with the model to inform improvements and optimizations.

Mitigation Strategies

- Real-Time Monitoring Tools: Implement monitoring solutions that provide real-time insights into model performance metrics such as latency, throughput, and accuracy.

- Comprehensive Logging: Maintain detailed logs of model inputs, outputs, and system states to facilitate troubleshooting and auditing.

- Alert Systems: Set up automated alerts for predefined thresholds to quickly address performance issues or anomalies.
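
The monitoring and alerting points above can be sketched as a periodic check over a window of recent requests. This is a minimal sketch under stated assumptions: the function names and the thresholds (2000 ms for p95 latency, 5% for error rate) are illustrative, and a real deployment would typically export these metrics to a monitoring system instead of computing them inline.

```python
from typing import List, Sequence


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct in 1..100."""
    if not values:
        raise ValueError("values must not be empty")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct/100 * n
    return ordered[rank - 1]


def check_alerts(
    latencies_ms: Sequence[float],
    error_count: int,
    request_count: int,
    p95_threshold_ms: float = 2000.0,
    error_rate_threshold: float = 0.05,
) -> List[str]:
    """Return a list of alert messages for the current monitoring window."""
    alerts: List[str] = []
    p95 = percentile(latencies_ms, 95)
    if p95 > p95_threshold_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds {p95_threshold_ms:.0f} ms")
    error_rate = error_count / request_count if request_count else 0.0
    if error_rate > error_rate_threshold:
        alerts.append(f"error rate {error_rate:.1%} exceeds {error_rate_threshold:.1%}")
    return alerts
```

Tail percentiles such as p95 are usually more informative than the mean for LLM latency, because a small share of long generations can dominate the user experience.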

11. Latency in Training and Fine-Tuning

Challenges

- Continuous Learning: For applications requiring up-to-date knowledge, models may need frequent retraining or fine-tuning, leading to increased latency in deployment cycles.

- Resource Allocation: Allocating sufficient resources for ongoing training without disrupting production services.

Mitigation Strategies

- Incremental Learning: Implement incremental or continual learning approaches that allow models to update with new data efficiently.

- Separate Training Environments: Maintain separate environments for training and production to prevent interference and ensure stability.

- Automated Pipelines: Use automated machine learning pipelines to streamline the training and deployment process, reducing latency between updates.

12. Handling Multilingual and Multimodal Inputs

Challenges

- Language Support: Ensuring that LLMs effectively handle multiple languages, dialects, and regional variations.

- Multimodal Integration: Integrating text with other modalities (e.g., images, audio) increases complexity in processing and generating outputs.

Mitigation Strategies

- Comprehensive Training Datasets: Incorporate diverse multilingual and multimodal datasets during training to enhance model versatility.

- Language Detection and Routing: Implement mechanisms to detect input language and route it to appropriate language-specific modules if necessary.

- Modular Multimodal Processing: Design models with specialized components for different modalities, facilitating more efficient and accurate integration.

13. User Privacy and Data Protection

Challenges

- Data Retention: Managing how user data is stored, processed, and retained to prevent unauthorized access or breaches.

- Anonymization: Ensuring that user data is anonymized to protect individual privacy without compromising model performance.

Mitigation Strategies

- Data Minimization: Collect and process only the necessary data required for the application’s functionality.

- Encryption: Use strong encryption methods for data at rest and in transit to secure user information.

- Access Controls: Implement strict access controls and authentication mechanisms to limit data access to authorized personnel only.
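
Data minimization and retention limits can be enforced in code as well as in policy. The sketch below keeps only an allowlisted set of fields from an incoming payload and drops stored records older than a retention window. The field names, the 30-day window, and both function names are hypothetical choices for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List

# Hypothetical allowlist: only these fields are needed by the application.
ALLOWED_FIELDS = {"message", "locale"}


def minimize(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only allowlisted fields so unneeded personal data is never stored."""
    return {key: value for key, value in payload.items() if key in ALLOWED_FIELDS}


def purge_expired(records: List[Dict[str, Any]], now: datetime,
                  retention_days: int = 30) -> List[Dict[str, Any]]:
    """Return only the records whose 'stored_at' timestamp is within the retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [record for record in records if record["stored_at"] >= cutoff]


request = {"message": "Hello", "locale": "en", "email": "user@example.com"}
print(minimize(request))  # {'message': 'Hello', 'locale': 'en'}
```

An allowlist fails safe: a new field added upstream is dropped by default until someone decides it is actually required.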

14. Handling Out-of-Distribution Inputs

Challenges

- Robustness: Ensuring that LLMs perform reliably when encountering inputs that differ significantly from their training data.

- Unexpected Behavior: Out-of-distribution inputs can lead to unpredictable or erroneous outputs, affecting user trust and system reliability.

Mitigation Strategies

- Diverse Training Data: Train models on diverse datasets to enhance their ability to generalize to varied inputs.

- Anomaly Detection: Implement systems to detect unusual or unexpected inputs and handle them appropriately, such as by flagging for human review.

- Fallback Mechanisms: Design fallback strategies, such as default responses or alternative processing pathways, when the model encounters uncertain inputs.
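
A fallback mechanism of the kind described above might look like the following sketch. It assumes the model wrapper returns a response together with some confidence score, which is a simplification: LLMs do not natively provide a calibrated confidence, so in practice that score would come from a separate heuristic or classifier. All names and thresholds here are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

DEFAULT_FALLBACK = "Sorry, I can't answer that reliably. A human agent will follow up."


@dataclass
class Answer:
    text: str
    source: str  # "model" or "fallback"


def answer_with_fallback(
    prompt: str,
    model: Callable[[str], Tuple[str, float]],
    min_confidence: float = 0.6,
    max_prompt_chars: int = 4000,
    fallback_text: str = DEFAULT_FALLBACK,
) -> Answer:
    """Use the model's answer only when the input and the confidence look acceptable."""
    # Cheap input checks catch empty or oversized prompts before calling the model.
    if not prompt.strip() or len(prompt) > max_prompt_chars:
        return Answer(fallback_text, "fallback")
    try:
        text, confidence = model(prompt)
    except Exception:
        # Any model failure degrades to the default response instead of an error page.
        return Answer(fallback_text, "fallback")
    if confidence < min_confidence:
        return Answer(fallback_text, "fallback")
    return Answer(text, "model")
```

Recording which requests took the fallback path also gives a running sample of out-of-distribution inputs for later review.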

15. Environmental Impact

Challenges

- Energy Consumption: Training and deploying large models consume significant amounts of energy, contributing to environmental concerns.

- Sustainability: Balancing the benefits of advanced AI with the need for sustainable and eco-friendly practices.

Mitigation Strategies

- Energy-Efficient Practices: Optimize model architectures and training processes to reduce energy consumption.

- Green Hosting Providers: Choose data centers and cloud providers committed to using renewable energy sources.

- Carbon Offsetting: Invest in carbon offset projects to mitigate the environmental footprint of AI operations.
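
Reducing energy use starts with estimating it. The sketch below shows a back-of-the-envelope calculation: hardware power draw times runtime, scaled by the data center's power usage effectiveness (PUE), then converted to emissions with a grid carbon-intensity factor. Every input value in the example is a made-up placeholder, and the function names are hypothetical; real figures should come from measured power draw and the provider's published data.

```python
def energy_kwh(gpu_count: int, watts_per_gpu: float, hours: float, pue: float = 1.0) -> float:
    """Estimated energy in kWh: device power x time, scaled by data-center overhead (PUE)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return gpu_count * (watts_per_gpu / 1000.0) * hours * pue


def emissions_kg(energy_in_kwh: float, kg_co2_per_kwh: float) -> float:
    """Estimated emissions given the grid's carbon intensity in kg CO2 per kWh."""
    return energy_in_kwh * kg_co2_per_kwh


# Placeholder inputs: 8 GPUs at 400 W for 24 hours, PUE of 1.2, 0.4 kg CO2/kWh.
daily_energy = energy_kwh(gpu_count=8, watts_per_gpu=400, hours=24, pue=1.2)
daily_emissions = emissions_kg(daily_energy, kg_co2_per_kwh=0.4)
print(f"{daily_energy:.2f} kWh/day, {daily_emissions:.2f} kg CO2/day")
```

Even a rough estimate like this makes it possible to compare options, such as a smaller model or a region with a cleaner grid, on the same footing.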

16. Legal and Regulatory Compliance

Challenges

- Emerging Regulations: Navigating a complex and evolving landscape of laws and regulations related to AI and data usage.

- Intellectual Property: Managing intellectual property rights, especially when models are trained on proprietary or copyrighted data.

Mitigation Strategies

- Legal Consultation: Work with legal experts to ensure compliance with relevant laws and regulations.

- Documentation and Auditing: Maintain thorough documentation of data sources, model training processes, and deployment practices to facilitate compliance audits.

- Policy Development: Develop and enforce internal policies that align with legal requirements and ethical standards.

17. User Trust and Transparency

Challenges

- Trust Building: Convincing users to trust and adopt AI-driven solutions, especially when outputs are not always perfect.

- Transparency: Providing clear explanations of how the model works and how decisions are made to build user trust.

Mitigation Strategies

- Explainability: Implementing explainable AI techniques to provide insights into model decisions.

- User Education: Educating users about the capabilities and limitations of AI models to manage expectations.

- Feedback Mechanisms: Incorporating user feedback to improve model performance and build trust over time.

- Transparency: Sharing information about data sources, model architecture, and decision-making processes so that users can see what the system's outputs are based on.

18. Vendor Lock-In

Challenges

- Dependency: Relying on a single vendor for AI services can lead to vendor lock-in, limiting flexibility and scalability.

- Cost Concerns: Switching vendors can be costly and disruptive, especially if the existing infrastructure is tightly integrated with the vendor’s services.

- Innovation Constraints: Vendor lock-in may hinder innovation and limit access to emerging technologies and solutions.

Mitigation Strategies

- Multi-Vendor Strategy: Diversifying AI service providers to reduce dependency on a single vendor and increase flexibility.

- Interoperability: Ensuring that systems are designed to work with multiple vendors and can easily integrate new services.

- Exit Strategy: Developing contingency plans and exit strategies to mitigate the risks of vendor lock-in and facilitate smooth transitions if needed.

- Open Standards: Supporting open standards and interoperability frameworks to promote vendor-neutral solutions and reduce lock-in risks.
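
In code, the interoperability and exit-strategy points above usually come down to keeping vendor-specific calls behind a small interface. The sketch below shows one way to do that with a `Protocol` and a failover helper. `TextGenerator`, `EchoProvider`, `FailingProvider`, and `generate_with_failover` are all hypothetical stand-ins; real adapters would wrap each vendor's SDK behind the same `generate` method.

```python
from typing import List, Protocol


class TextGenerator(Protocol):
    """The only surface application code depends on; each vendor gets an adapter."""

    def generate(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for a working vendor adapter."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class FailingProvider:
    """Stand-in for a vendor that is currently unavailable."""

    def generate(self, prompt: str) -> str:
        raise ConnectionError("provider unavailable")


def generate_with_failover(prompt: str, providers: List[TextGenerator]) -> str:
    """Try each provider in order and return the first successful response."""
    errors: List[Exception] = []
    for provider in providers:
        try:
            return provider.generate(prompt)
        except Exception as exc:  # collect the failure and move to the next vendor
            errors.append(exc)
    raise RuntimeError(f"all {len(errors)} providers failed")


print(generate_with_failover("hello", [FailingProvider(), EchoProvider()]))  # echo: hello
```

Because application code only sees `TextGenerator`, switching or adding a vendor means writing one new adapter rather than touching every call site. Prompts and output handling may still need retuning per model, so the abstraction reduces switching cost without eliminating it.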

19. Model Governance and Compliance

Challenges

- Model Oversight: Ensuring that models are used responsibly and ethically, with proper governance and compliance measures in place.

- Regulatory Requirements: Meeting legal and regulatory obligations related to data privacy, fairness, and transparency.

- Accountability: Establishing clear lines of responsibility and accountability for model performance and outcomes.

- Ethical Considerations: Addressing ethical concerns and ensuring that models are deployed in a manner consistent with ethical guidelines and societal values.

- Model Lifecycle Management: Managing models throughout their lifecycle, from development and training to deployment and retirement.

Mitigation Strategies

- Model Governance Framework: Establishing a governance framework that outlines roles, responsibilities, and processes for model oversight and compliance.

- Compliance Monitoring: Implementing monitoring and auditing mechanisms to ensure that models adhere to legal and regulatory requirements.

- Ethics Review: Conducting ethics reviews of models to assess potential risks, biases, and ethical implications.

- Transparency and Accountability: Promoting transparency and accountability in model development and deployment, including clear documentation, explainability, and user feedback mechanisms.

20. Model Robustness and Security

Challenges

- Adversarial Attacks: Models are vulnerable to adversarial attacks that can manipulate inputs to produce incorrect or malicious outputs.

- Data Poisoning: Malicious actors can inject poisoned data into the training set to compromise model performance.

- Privacy Risks: Models may inadvertently leak sensitive information or expose vulnerabilities that can be exploited by attackers.

- System Vulnerabilities: Weaknesses in the model architecture or deployment infrastructure can be exploited to compromise system security.

Mitigation Strategies

- Adversarial Training: Training models to be robust against adversarial attacks by incorporating adversarial examples during training.

- Data Sanitization: Implementing data validation and sanitization processes to detect and remove poisoned data from training sets.

- Secure Deployment: Ensuring that models are deployed in secure environments with appropriate access controls, encryption, and monitoring.

- Threat Detection: Implementing threat detection mechanisms to identify and respond to security threats, including adversarial attacks and data breaches.

- Regular Audits: Conducting regular security audits and penetration testing to identify vulnerabilities and ensure compliance with security best practices.

Silpa brings 5 years of experience in working on diverse ML projects, specializing in designing end-to-end ML systems tailored for real-time applications. Her background in statistics (Bachelor of Technology) provides a strong foundation for her work in the field. Silpa is also the driving force behind the development of the content you find on this site.
