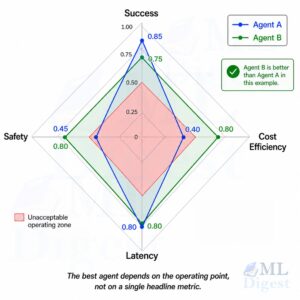

How to Evaluate Agentic Systems: Methods, Metrics, and Best Practices

Imagine hiring a new teammate who can search the web,...

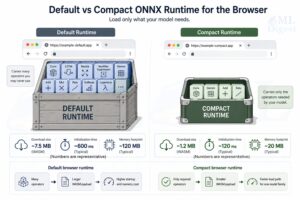

ONNX Runtime Compaction: How to Make Browser ML Fast, Lightweight, and Practical

Imagine trying to deliver a single cup of coffee by...

Deploying ONNX Models Made Easy: A Practical Step-by-Step Tutorial

ONNX (Open Neural Network Exchange) is a standard, open-source format...

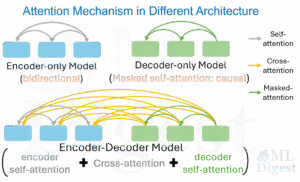

Attention Mechanisms Made Easy: All Types Explained in One Post

In ML, attention is the mechanism that lets a model...
