LLMs handle out-of-vocabulary (OOV) words through their tokenization process, which represents even unfamiliar or rare inputs as sequences of units the model already knows. Here’s how it works:

1. Tokenization Techniques:

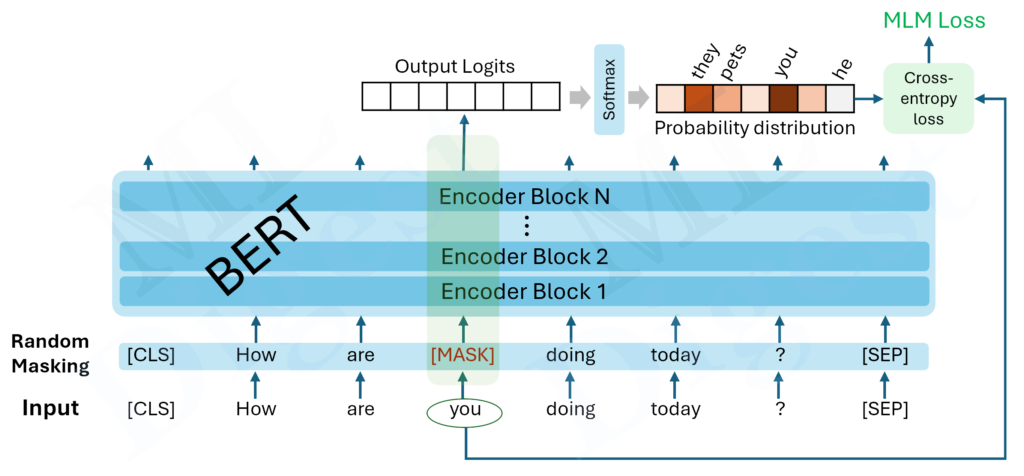

- Subword Tokenization (e.g., Byte Pair Encoding (BPE), WordPiece, Unigram):

- Models such as GPT (which uses BPE) and BERT (which uses WordPiece) break words into smaller units called subwords. For example:

- The word “unfamiliar” might be split into ["un", "familiar"].

- A rare word like “autodidactism” might be split into ["auto", "did", "act", "ism"].

- Because the vocabulary contains common subwords and, as a fallback, individual characters, a word that is not in the vocabulary as a whole can usually still be represented as a sequence of known pieces.
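The splitting step can be sketched as a greedy longest-match search over a vocabulary, which is roughly how WordPiece segments a word at inference time. This is a minimal sketch under stated assumptions: the vocabulary `VOCAB` and the function `greedy_subword_split` are hypothetical, real WordPiece marks word-internal pieces with a `##` prefix and maps the whole word to `[UNK]` when it cannot be segmented, and BPE and Unigram choose splits differently.

```python
import string

# Hypothetical toy vocabulary: a few subwords plus every lowercase letter,
# so any lowercase word can fall back to single characters.
VOCAB = {"un", "familiar", "auto", "did", "act", "ism"} | set(string.ascii_lowercase)


def greedy_subword_split(word: str, vocab: set[str]) -> list[str]:
    """Split `word` by repeatedly taking the longest prefix found in `vocab`."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest candidate first, shrinking until a match is found.
        for end in range(len(word), start, -1):
            candidate = word[start:end]
            if candidate in vocab:
                pieces.append(candidate)
                start = end
                break
        else:
            # No prefix matched (character not in vocab): emit an unknown marker.
            pieces.append("[UNK]")
            start += 1
    return pieces


print(greedy_subword_split("unfamiliar", VOCAB))     # ['un', 'familiar']
print(greedy_subword_split("autodidactism", VOCAB))  # ['auto', 'did', 'act', 'ism']
print(greedy_subword_split("zyx", VOCAB))            # ['z', 'y', 'x']
print(greedy_subword_split("Zed", VOCAB))            # ['[UNK]', 'e', 'd']
```

The last two calls show the fallback behavior: an unseen lowercase word still decomposes into single letters, while a character missing from the vocabulary (here, uppercase “Z”) becomes `[UNK]`.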

- Byte-Level Tokenization:

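- Treats each of the 256 possible byte values (0-255) as a base token.

- Because every string can be encoded as a sequence of UTF-8 bytes, a byte-level tokenizer never meets an unknown symbol, so it can handle any input, including misspellings, rare words, and text in any language. Byte-level BPE, used by GPT-2 for example, learns merges on top of these 256 base tokens.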

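- For instance, “🤖AI🌍” occupies 10 bytes in UTF-8 (4 for each emoji and 1 each for “A” and “I”), so even if the vocabulary has no token for these emojis, they can still be represented as sequences of byte tokens.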
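The byte-level view can be checked directly in Python: encoding a string as UTF-8 yields integers in the range 0-255, which a pure byte-level tokenizer would use as token IDs. The helper names `byte_tokens` and `decode_byte_tokens` are illustrative; a byte-level BPE tokenizer would then merge frequent byte sequences, so it usually emits fewer tokens than this.

```python
def byte_tokens(text: str) -> list[int]:
    """Map text to byte-level token IDs: one ID (0-255) per UTF-8 byte."""
    return list(text.encode("utf-8"))


def decode_byte_tokens(ids: list[int]) -> str:
    """Invert byte_tokens by reassembling the bytes and decoding them as UTF-8."""
    return bytes(ids).decode("utf-8")


ids = byte_tokens("🤖AI🌍")
print(ids)       # [240, 159, 164, 150, 65, 73, 240, 159, 140, 141]
print(len(ids))  # 10: each emoji takes 4 bytes, "A" and "I" take 1 each
print(decode_byte_tokens(ids))  # 🤖AI🌍
```

Because the mapping is lossless in both directions, nothing in the input is ever replaced by an unknown-token placeholder.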

2. Embedding and Context Understanding:

- Each token (subword or byte) is mapped to an embedding in a high-dimensional space.

- Even when the model encounters a novel word or token combination, the embeddings of its component tokens, combined with the surrounding context by the model’s layers, let it infer a likely meaning from patterns learned during training.
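The lookup itself is simple: each token is mapped to an integer ID, and the ID selects one row of an embedding table. The sketch below assumes a hypothetical six-token vocabulary and an 8-dimensional table filled with random numbers; in a real model the table is learned during training and is far larger.

```python
import random

# Hypothetical toy vocabulary; real models have tens of thousands of tokens.
VOCAB = ["auto", "did", "act", "ism", "un", "familiar"]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}
DIM = 8  # toy embedding width; real models use hundreds to thousands

# Random values stand in for embeddings that a real model learns in training.
rng = random.Random(0)
EMBEDDINGS = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in VOCAB]


def embed(tokens: list[str]) -> list[list[float]]:
    """Map tokens to IDs, then look up one embedding row per ID."""
    ids = [TOKEN_TO_ID[tok] for tok in tokens]
    return [EMBEDDINGS[i] for i in ids]


vectors = embed(["auto", "did", "act", "ism"])
print(len(vectors), len(vectors[0]))  # 4 8
```

A novel word like “autodidactism” never needs its own row: it reuses the rows for its pieces, and the transformer layers that follow combine those vectors with the surrounding context.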

3. Handling Entirely Novel Inputs:

- Misspelled or Noisy Inputs:

- These are broken into tokens the model knows, which may still carry some semantic information. For example, the misspelling “autdoidactism” will not split exactly like “autodidactism,” but it may share pieces such as “act” and “ism,” giving the model partial cues.

- Code or Specialized Notation:

- LLMs trained on diverse datasets (e.g., code snippets, technical writing) can handle uncommon tokens by identifying familiar patterns or structures.
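The effect of a misspelling can be illustrated with a small BPE encoder. The loop below follows the usual BPE encoding procedure (repeatedly merge the adjacent pair with the lowest learned rank), but the merge list `MERGES` is hand-written and hypothetical rather than learned from a corpus, and it works on characters rather than bytes.

```python
# Hypothetical merge list, in priority order (earlier = learned first).
MERGES = [
    ("a", "u"), ("t", "o"), ("au", "to"),
    ("d", "i"), ("di", "d"),
    ("a", "c"), ("ac", "t"),
    ("i", "s"), ("is", "m"),
]
RANKS = {pair: rank for rank, pair in enumerate(MERGES)}


def bpe_encode(word: str) -> list[str]:
    """Apply the merges to `word`, always merging the lowest-rank pair first."""
    tokens = list(word)
    while len(tokens) > 1:
        # Rank every adjacent pair; pairs with no learned merge rank as infinity.
        pairs = [(RANKS.get(pair, float("inf")), pair) for pair in zip(tokens, tokens[1:])]
        best_rank, best_pair = min(pairs)
        if best_rank == float("inf"):
            break  # no learned merge applies any more
        # Merge every non-overlapping occurrence of best_pair, left to right.
        merged = []
        i = 0
        while i < len(tokens):
            if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == best_pair:
                merged.append(tokens[i] + tokens[i + 1])
                i += 2
            else:
                merged.append(tokens[i])
                i += 1
        tokens = merged
    return tokens


print(bpe_encode("autodidactism"))  # ['auto', 'did', 'act', 'ism']
print(bpe_encode("autdoidactism"))  # ['au', 't', 'd', 'o', 'i', 'd', 'act', 'ism']
```

With these merges, the misspelled word costs 8 tokens instead of 4 but still shares “act” and “ism” with the correct spelling, which is the kind of partial overlap the model may be able to use.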

4. Limitations:

- Loss of Semantic Specificity:

- If a rare or completely novel word is tokenized into many smaller units, its specific meaning might not be fully captured.

- Tokenization Overhead:

- Rare or OOV words may be split into many tokens, which increases computational cost and uses up more of the model’s context window.

- Dependence on Training Data:

- If the model hasn’t encountered similar patterns in training, its ability to infer meaning may be limited.
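The tokenization overhead is easiest to see in the worst case, where no learned merge covers a string and a byte-level tokenizer falls back to one token per UTF-8 byte. The function name `byte_fallback_length` is illustrative; for a byte-level BPE tokenizer these counts are an upper bound, since merges can only combine bytes into fewer tokens.

```python
def byte_fallback_length(text: str) -> int:
    """Token count if each UTF-8 byte becomes its own token (no merges)."""
    return len(text.encode("utf-8"))


# "h\u00e9llo" is "héllo"; the escape avoids ambiguity about how "é" is stored.
for word in ["hello", "h\u00e9llo", "こんにちは"]:
    print(word, len(word), byte_fallback_length(word))
# hello 5 5
# héllo 5 6
# こんにちは 5 15
```

Five characters cost 5, 6, or 15 tokens depending on the script, and because standard self-attention cost grows with the square of sequence length, the extra tokens add more than a proportional amount of compute.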

Example:

- Input: “ChatGPT is great for autodidactism!”

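- Tokenization (BPE, illustrative; the exact split depends on the tokenizer’s vocabulary):

["Chat", "GPT", "is", "great", "for", "auto", "did", "act", "ism", "!"]

- The model looks up an embedding for each token and uses the surrounding context to process the sentence.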
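Real BPE tokenizers usually pre-split text before applying merges. GPT-2, for example, uses a regular expression that keeps a leading space attached to the following word, which is why its tokens often look like " is" rather than "is". The pattern below is a simplified, hypothetical approximation of that step, not GPT-2’s actual regex.

```python
import re

# Simplified pattern loosely modeled on GPT-2's pre-tokenizer: an optional
# leading space followed by a run of word characters or a run of punctuation.
PRETOKENIZE = re.compile(r" ?\w+| ?[^\w\s]+")


def pretokenize(text: str) -> list[str]:
    """Split text into chunks that BPE merges would then be applied to."""
    return PRETOKENIZE.findall(text)


print(pretokenize("ChatGPT is great for autodidactism!"))
# ['ChatGPT', ' is', ' great', ' for', ' autodidactism', '!']
```

Each chunk would then be encoded separately with the merge rules, so merges never cross word boundaries. Unlike GPT-2’s full pattern, this simplified one drops extra whitespace such as double spaces or newlines.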

By tokenizing inputs into manageable units and leveraging context-aware embeddings, LLMs can handle OOV words effectively, even if not perfectly.

Silpa brings 5 years of experience in working on diverse ML projects, specializing in designing end-to-end ML systems tailored for real-time applications. Her background in statistics (Bachelor of Technology) provides a strong foundation for her work in the field. Silpa is also the driving force behind the development of the content you find on this site.
