RoPE Made Easy: Understanding Rotary Positional Embeddings Step by Step

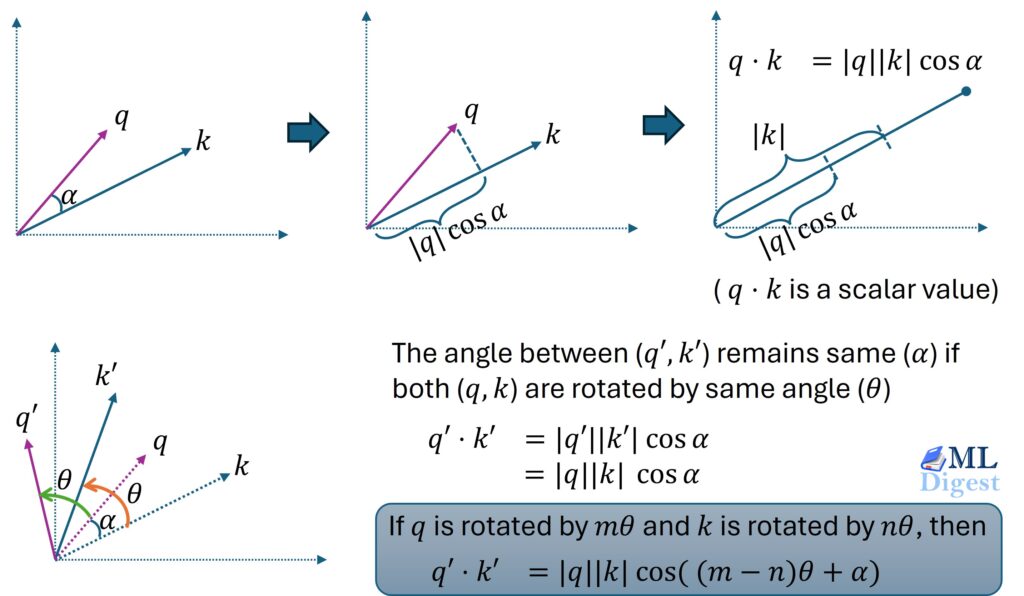

Rotary Positional Embeddings (RoPE) represent a shift from viewing position as a static label to viewing it as a geometric relationship. By rotating each token's vector by an angle that depends on its position, RoPE lets a neural network relate “King” and “Queen” not just by their semantic meaning, but also by their relative placement in the text.
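
To make the geometric idea concrete, here is a minimal sketch in plain Python. It assumes the common RoPE formulation in which consecutive pairs of vector components are rotated by an angle proportional to the token's position. The function name `rope_rotate`, the `base` value of 10000, and the toy `query` and `key` vectors are illustrative assumptions, not taken from this article.

```python
import math

def rope_rotate(vec, position, base=10000.0):
    """Rotate consecutive pairs of `vec` by position-dependent angles.

    Illustrative helper (hypothetical name): pair k is rotated by
    position * base**(-2k / dim), a commonly used frequency schedule.
    """
    dim = len(vec)
    if dim % 2 != 0:
        raise ValueError("vector length must be even")
    rotated = []
    for i in range(0, dim, 2):
        # i is the element index of the pair's first component, so i == 2k
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        rotated.extend([x * c - y * s, x * s + y * c])
    return rotated

def dot(a, b):
    """Plain dot product, standing in for an attention score."""
    return sum(x * y for x, y in zip(a, b))

# Toy vectors chosen arbitrarily for the demonstration.
query = [0.5, -1.0, 0.3, 0.8]
key = [1.2, 0.4, -0.7, 0.1]

# Same relative offset (2 positions apart) at two different absolute positions.
score_near = dot(rope_rotate(query, 3), rope_rotate(key, 1))
score_far = dot(rope_rotate(query, 103), rope_rotate(key, 101))

print(score_near, score_far)
```

Under these assumptions the two printed scores agree up to floating-point error, because the dot product of two rotated vectors depends only on the difference between their rotation angles. That is the sense in which position becomes a relationship between tokens rather than a label attached to each one.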
