Introduction: The Broken Yardstick

Imagine you are a textile factory manager in the early 19th century. For years, your entire measurement system has been simple: you evaluate your weavers based on how many threads they can manually weave per hour. The math is easy, the metric is clear, and the best workers are obviously the fastest ones.

Suddenly, the power loom is introduced to your factory floor.

Overnight, a novice employee operating a power loom can produce ten times the fabric of your most skilled manual weaver. If you continue to evaluate your employees based on the old metric of “manual thread counts,” your system breaks completely. You will end up rewarding the person who pushes the machine’s start button the fastest, rather than the person who designs the intricate patterns, maintains the delicate machinery, or ensures the quality of the final cloth.

Today, we are living through the digital equivalent of the power loom moment.

Artificial intelligence tools that write boilerplate code, draft marketing emails, summarize long reports, and generate complex images are changing the nature of knowledge work. In this new reality, traditional metrics like “lines of code written,” “words produced,” or “hours spent drafting” are rapidly becoming obsolete.

This shift is causing understandable anxiety. Employees worry their unique skills are being devalued, while companies are struggling to define what “high performance” looks like when a machine does the heavy lifting.

If we want to thrive in this new era, we must rebuild our evaluation systems from the ground up. We need to shift our focus from measuring output to measuring outcome.

The Intuition: Shifting from Output to Outcome

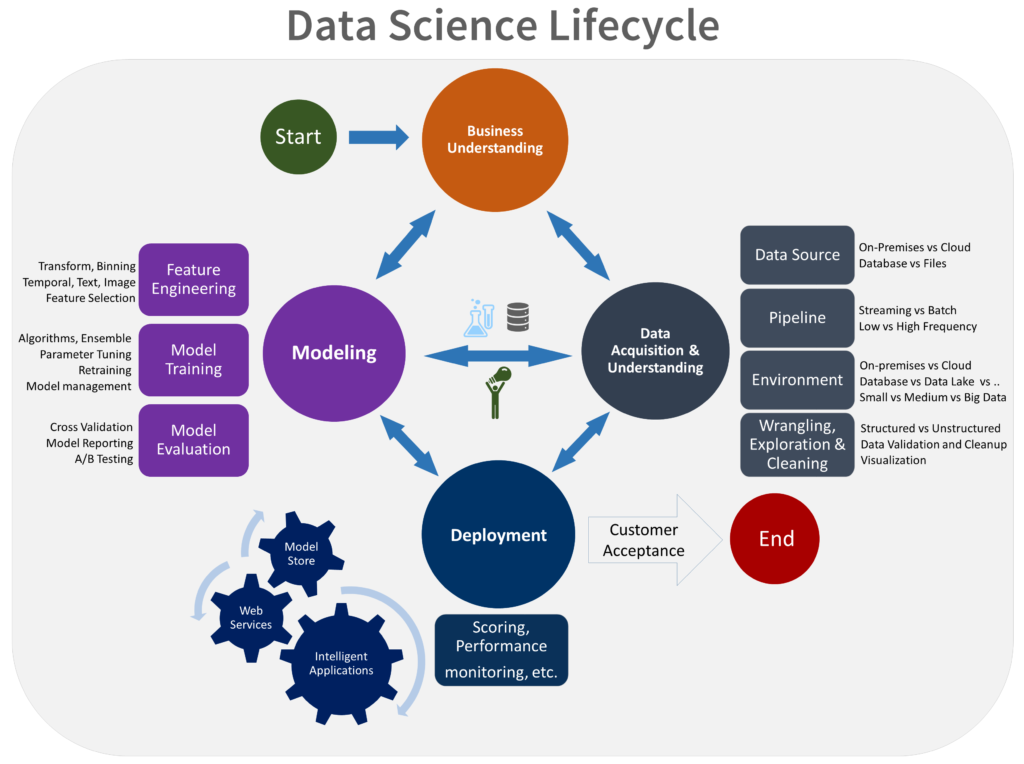

To understand how to evaluate performance today, we need to visualize how the workflow has changed. The fundamental difference lies in where human effort is best applied.

The Pre-AI Era: The Linear Path of Execution

Until recently, knowledge work followed a fairly linear path. A human received a task and spent the vast majority of their time (perhaps 80%) on manual execution.

- The coder typed out syntax line by line.

- The writer stared at a blank page and drafted sentences.

- The analyst crunched numbers manually in a spreadsheet.

Only about 20% of the time was left for high-level review or strategy. The measurement focus was naturally on the Output: the sheer volume of things produced during that 80% execution phase.

The AI Era: The Cycle of Curation and Architecture

In the AI era, this dynamic flips entirely. The AI now handles the heavy lifting of execution.

- The new workflow: A human receives a strategic goal. They spend perhaps 20% of their time formulating the right “prompts” or instructions for the AI. The AI then handles the bulk of the execution in seconds or minutes.

- The human’s new role: The remaining 80% of human time is now freed up for architectural design, critical review, refinement, and integration.

The goal is no longer just an Output; it is a high-quality Outcome.

In this new paradigm, the employee’s role shifts from being primarily a creator to being a curator and architect. Therefore, evaluation systems that measure how hard someone works at typing are useless. We must measure how effectively they think.

The Four Pillars of Modern Performance Evaluation

If we can’t measure lines of code or word counts, what do we measure? A robust system for evaluating employee performance in the AI age should rely on four key pillars that assess higher-order skills.

1. AI Leverage (The Efficiency Accelerator)

It is no longer a “cheat” to use AI to do your job faster; it is an expectation. We must evaluate employees on how effectively they utilize these tools to bypass mundane work.

- Obsolete Metric: “How long did it take you to write this report from scratch?”

- Modern Metric: “How quickly did you convert a raw data set into an actionable report using AI tools, and how effectively did you automate the repetitive parts of the process?”

- What to look for: Employees who proactively build libraries of effective prompts, automate workflows, and use AI to clear the “blank page problem” instantly, allowing them to get to the real work faster.

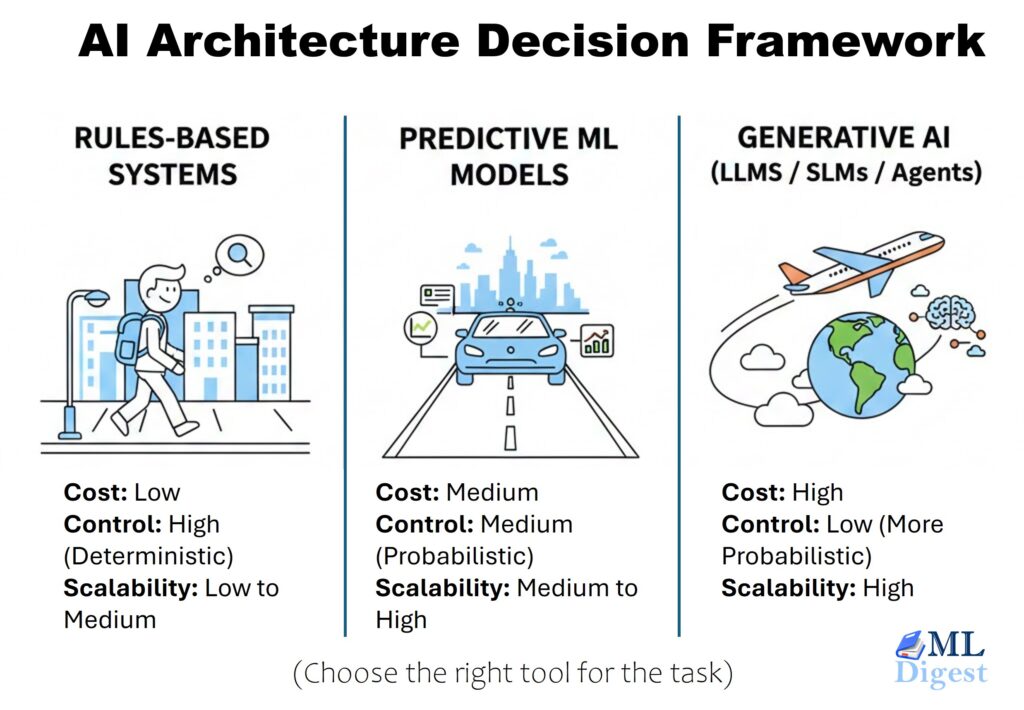

2. Architectural Complexity (The Strategic Mind)

When AI solves easy problems instantly, the value of a human employee is defined by their ability to solve hard problems. Performance should be judged by the complexity and ambiguity of the challenges an employee tackles.

- Obsolete Metric: “How many individual tasks did you complete today?”

- Modern Metric: “How well did you design the overall system architecture that integrates multiple AI-generated components into a cohesive whole?”

- What to look for: The ability to handle high-level abstraction, connect seemingly unrelated dots, and design long-term strategies that AI cannot yet formulate on its own.

3. Quality, Reliability, and Empathy (The Human Touch)

Generative AI is infamous for confidently producing inaccurate information (hallucinations) or tone-deaf content. The human’s critical role is to act as the final gatekeeper of quality.

- Obsolete Metric: “Is the document free of typos?” or “Does the code compile?”

- Modern Metric: “Is the outcome factually accurate, secure, unbiased, and genuinely useful to the end-user?”

- What to look for: Rigorous fact-checking of AI outputs, close attention to the security implications of AI-generated code, and the ability to inject human empathy and brand voice into AI-drafted communications.

4. Collaboration and Leadership (The Multiplier Effect)

In an AI world, the “lone wolf” genius is less valuable than the leader who raises the technological water level for the entire team. Knowledge hoarding is out; knowledge scaling is in.

- Obsolete Metric: Individual stack ranking based on personal output.

- Modern Metric: “How effectively have you created workflows, prompt libraries, or guidelines that help the rest of the team use AI better?”

- What to look for: Mentorship on how to critique AI outputs, fostering a culture of safe experimentation with new tools, and leading cross-functional teams where human expertise and AI capabilities intersect.

Conclusion

Returning to our power loom analogy: the invention of industrial machinery didn’t eliminate the need for human workers, but it radically changed what made a worker valuable. The weavers who succeeded weren’t the ones who tried to out-weave the machines; they were the ones who learned to maintain them, fix them when they jammed, and design the complex patterns the machines produced.

AI is not here to replace human judgment; it is here to scale it. By shifting our performance metrics from brute-force output to high-value outcomes, companies can alleviate employee anxiety and unlock the true potential of this new technological era.

Happy is a seasoned ML professional with over 15 years of experience. His expertise spans various domains, including Computer Vision, Natural Language Processing (NLP), and Time Series analysis. He holds a PhD in Machine Learning from IIT Kharagpur and has furthered his research with postdoctoral experience at INRIA-Sophia Antipolis, France. Happy has a proven track record of delivering impactful ML solutions to clients.
