Nexa AI has unveiled OmniVision-968M, a compact multimodal model with 968M parameters that handles both visual and text inputs. It is designed for edge devices, where memory and compute are limited.

Architecture Overview

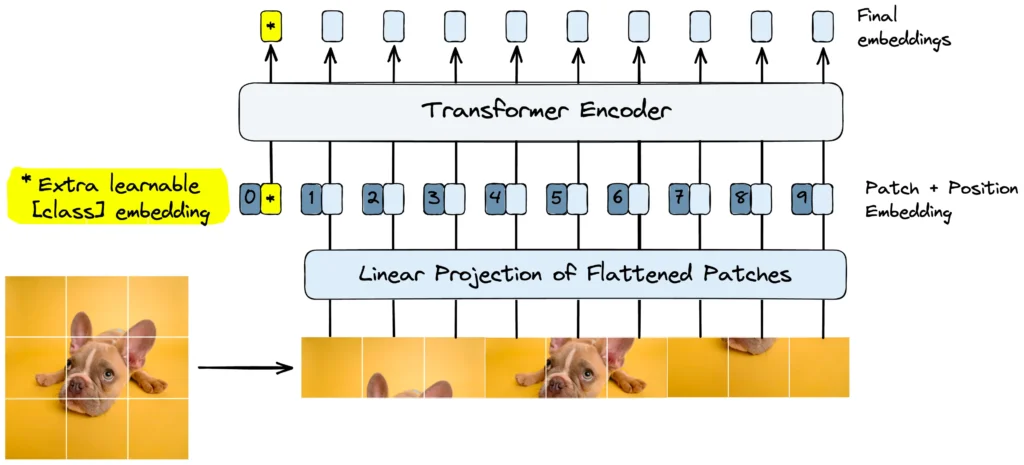

OmniVision’s architecture is composed of three primary components:

- Base Language Model: Uses Qwen2.5-0.5B-Instruct to process text inputs.

- Vision Encoder: SigLIP-400M operates at 384×384 resolution with 14×14 patch size to generate image embeddings.

- Projection Layer: Aligns these embeddings using a multi-layer perceptron (MLP), enabling comprehensive visual-language understanding.
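As a quick check on the vision encoder figures above, the number of image tokens follows from the resolution and patch size. The helper below is an illustrative sketch (the function name is hypothetical, not part of any Nexa or SigLIP API), and it assumes square images with non-overlapping patches, dropping any partial patch at the border.

```python
def num_patch_tokens(image_size: int, patch_size: int) -> int:
    """Count non-overlapping square patches in a square image.

    Assumes partial patches at the border are dropped, so the
    per-side count is the floor of image_size / patch_size.
    """
    if image_size <= 0 or patch_size <= 0:
        raise ValueError("image_size and patch_size must be positive")
    per_side = image_size // patch_size  # 384 // 14 == 27
    return per_side * per_side


print(num_patch_tokens(384, 14))  # 729, i.e. a 27 x 27 grid
```

Under these assumptions, each 384×384 image yields 729 patch embeddings for the projection layer to align.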

Training Process

- Pretraining: Only the projection layer parameters were trained (all others frozen) on image-caption pairs to establish basic visual-linguistic connections.

- Supervised Fine-tuning (SFT): The model’s contextual understanding was improved using image-based question-answering datasets and structured chat histories involving images.

- Direct Preference Optimization (DPO): First, the model generates responses to images. A teacher model then creates minimally edited corrections that retain high semantic similarity with the original responses while fixing accuracy-critical elements. The original and corrected outputs form preference pairs, and the model is fine-tuned to prefer the corrected response over the original.
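The DPO step can be illustrated with the standard DPO loss for a single preference pair. This is a minimal sketch of the general technique, not Nexa AI's training code: the function name, the `beta` value, and the log-probabilities in the example are all assumptions made for illustration. Here the "chosen" response would be the teacher's correction and the "rejected" response the model's original output.

```python
import math


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for one (chosen, rejected) pair.

    Each argument is the summed log-probability of a full response
    under the trainable policy or the frozen reference model.
    beta is a hypothetical value; the source does not state one.
    """
    # Implicit rewards: how much the policy has moved from the reference.
    chosen_reward = policy_chosen_logp - ref_chosen_logp
    rejected_reward = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_reward - rejected_reward)
    # Loss is -log(sigmoid(margin)), computed in a numerically stable way.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical log-probabilities: the loss is lower when the policy
# favors the corrected (chosen) response more than the reference does.
print(dpo_loss(-4.0, -6.0, -5.0, -5.0) < dpo_loss(-6.0, -4.0, -5.0, -5.0))
```

Because the loss depends only on the difference between the two responses, minimally edited corrections concentrate the training signal on the tokens that actually changed.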

Technical Specifications

The FP16 version of OmniVision requires the following:

- RAM: 988 MB

- Storage Space: 948 MB

To run OmniVision, users can install the Nexa SDK and execute commands in their terminal or utilize a Streamlit local UI for easier interaction.

Performance Improvements

A major challenge in deploying multimodal models on edge devices is the high computational load from processing image tokens. A standard LLaVA-style architecture processes each image into 729 tokens (a 27×27 grid). This large token count causes significant processing delays and high computational requirements.

OmniVision overcomes this by implementing a reshaping mechanism that cuts the token count by a factor of nine, from `[batch_size, 729, hidden_size]` to `[batch_size, 81, hidden_size*9]`. This reduction not only boosts performance but also ensures more efficient processing without compromising accuracy.
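The shape change described above can be sketched for a single image as follows. The source gives only the input and output shapes, so the grouping order here (nine consecutive tokens concatenated into one) is an assumption, and `compress_tokens` is a hypothetical name; the actual model may group tokens differently, for example by 3×3 spatial neighborhoods.

```python
from typing import List


def compress_tokens(tokens: List[List[float]], group: int = 9) -> List[List[float]]:
    """Merge every `group` consecutive token embeddings into one wider token.

    Input shape:  [num_tokens, hidden_size]
    Output shape: [num_tokens // group, hidden_size * group]
    No values are discarded; they are only rearranged.
    """
    if len(tokens) % group != 0:
        raise ValueError("token count must be divisible by group size")
    return [
        [value for token in tokens[start:start + group] for value in token]
        for start in range(0, len(tokens), group)
    ]


# Toy example: 729 tokens with a small hidden size of 4.
image_tokens = [[float(i)] * 4 for i in range(729)]
compressed = compress_tokens(image_tokens)
print(len(compressed), len(compressed[0]))  # 81 36
```

The language model then attends over 81 image tokens instead of 729, while the projection layer receives all of the original embedding values in the wider vectors.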

In comparative tests on benchmarks including MM-VET, MMMU, ScienceQA, and POPE, OmniVision outperformed its predecessor, nanoLLAVA, on every benchmark where both models have reported scores. Results for the larger Qwen2-VL-2B are included for reference:

| Task | Nexa AI Omni-Vision | nanoLLAVA | Qwen2-VL-2B |
|---|---|---|---|
| MM-VET | 27.5 | 23.9 | 49.5 |
| ChartQA (Test) | 59.2 | N/A | 73.5 |
| MMMU (Test) | 41.8 | 28.6 | 41.1 |
| ScienceQA (Eval) | 62.2 | 59.0 | N/A |
| POPE | 89.4 | 84.1 | N/A |

Although OmniVision is currently in the early stages of development, the Nexa AI team is dedicated to addressing existing limitations and fine-tuning the model for production-ready applications in edge AI multimodal environments.

Silpa brings 5 years of experience in working on diverse ML projects, specializing in designing end-to-end ML systems tailored for real-time applications. Her background in statistics (Bachelor of Technology) provides a strong foundation for her work in the field. Silpa is also the driving force behind the development of the content you find on this site.
